Understanding Crawl Frequency For SEO: A Comprehensive Guide

Posted by Surgeon’s Advisor

Google’s ability to provide users with up-to-date information from the web relies on Google Crawl Frequency, a crucial element of SEO. This determines how frequently Google’s web crawlers visit your website to index and update its content, significantly impacting your site’s visibility and performance in search results. In this article, we’ll explore the fundamentals of Google Crawl Frequency, its influence on SEO, and the key factors affecting it.

Understanding Google Crawl Frequency

Google Crawl Frequency is an important factor in SEO, as it determines how often search engines such as Google send web crawlers to index and update a website’s content. It depends on a variety of factors, including the size of your website, how often its content changes, server capacity, load speed, crawl issues, and request rate. You can monitor your website’s crawl activity in Google Search Console, or get a rough picture of which pages have been indexed by running a site: query for your domain in the search bar. To encourage regular crawling and avoid crawl issues, review and update your content regularly.

In addition to the above factors, other elements that influence crawling frequency include search query volumes, dead links or 404 error codes on your pages, server load capacity, and mobile device compatibility. By earning high-quality backlinks from authoritative websites to the root level of your domain and by optimizing page loading times for users across all devices, you can further improve your chances of being crawled regularly by search engine bots. The period between crawls may range from a couple of days to several months, depending on the factors above.

Factors Influencing Crawl Frequency

Crawl frequency is an important factor in SEO as it determines how often search engine bots visit your website to index and update its content. Knowing the factors that influence crawl frequency is essential to ensure that your website is properly indexed and updated. Take these elements into account and optimize them so that search engines crawl your site regularly and efficiently.

Crawl Rate Limit

Google’s crawl rate limit is one of the primary factors that influence crawling frequency. This limit caps the number of requests a crawler sends to a website during any given period, so that crawling does not overload the server. Because it bounds how much of the site can be fetched in a given time window, the crawl rate limit also affects how frequently Google’s primary crawler returns to your domain.

Website Health

Website health is also an important factor in determining crawl frequency. By keeping track of your website’s crawl stats, you can detect any connectivity errors or crawl status issues that may lead to a decrease in crawling frequency. Additionally, you should keep your content fresh and up-to-date to improve its chances of being successfully indexed by search engines. A healthy site also responds quickly: a lower average response time lets crawlers fetch more pages in each visit. Furthermore, resolving indexing issues can help speed up search engine indexing and increase the likelihood of regular crawling.

Website Popularity

Website popularity is also a major factor in determining crawl frequency. Websites with greater levels of user engagement and actively updated content are more likely to receive regular crawls from Google’s bots, as search engine algorithms prioritize websites that perform well in the indexing process. Additionally, websites hosted on performant servers respond to web crawlers faster, with a lower average response time, which helps sustain regular crawls at the expected level.

XML Sitemaps and Site Structure

XML sitemaps and site structure can also have a significant impact on the frequency of crawls. By submitting your XML sitemap to Google Search Console, you can inform search engine algorithms about the location of your website’s pages and content. Furthermore, log file analysis can help identify crawl issues and enable you to implement solutions that keep Google’s bots crawling your website frequently. Additionally, a clean site architecture with well-structured URLs reduces both the load on servers and the average page response time when large websites are crawled, which helps pages get re-crawled and re-indexed regularly, including after an algorithm update.
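As a rough illustration of what a sitemap contains, the sketch below builds a minimal XML sitemap with Python’s standard library. The function name `build_sitemap` and the example.com URLs are hypothetical; a real site would usually generate this file from its CMS or a plugin.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for an iterable of (url, lastmod) pairs.

    lastmod is a 'YYYY-MM-DD' string, or None to omit the element.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical pages, for illustration only.
    print(build_sitemap([
        ("https://example.com/", "2024-01-15"),
        ("https://example.com/blog/crawl-frequency", None),
    ]))
```

The resulting file can be saved as sitemap.xml and submitted in Google Search Console. Keeping `lastmod` accurate is generally more useful to crawlers than updating it on every build.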

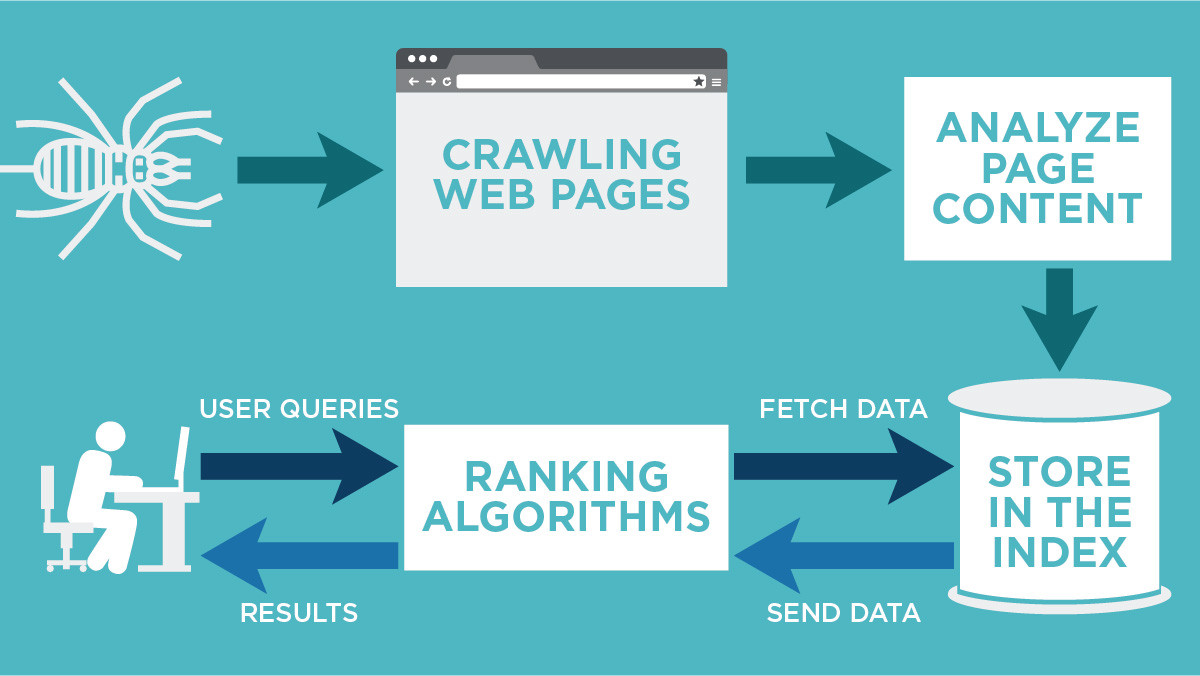

Exploring Google’s Web Crawling Process

Google’s web crawling process begins with a list of URLs from past crawls and sitemaps provided by website owners. Algorithms decide which sites to crawl, the crawl frequency, and the number of pages to fetch, known as the crawl budget. The process prioritizes frequently updated or recently modified pages, factoring in site popularity, link structures, and content updates.

Your site’s robots.txt file can restrict crawler access to certain parts of your site, while server errors may disrupt crawling. After crawling, content is indexed based on keywords and other relevant information, so that search results stay accurate and up to date.
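To see how robots.txt rules translate into crawl permissions, here is a small sketch using Python’s built-in `urllib.robotparser`. The rules and URLs are made up for illustration, and the helper name `is_crawlable` is an assumption. Python’s parser follows the basic robots.txt conventions, so treat its answer as an approximation of how Google’s own parser would read the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
"""


def is_crawlable(robots_txt, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/blog/post", "https://example.com/admin/login"):
        print(url, is_crawlable(ROBOTS_TXT, "Googlebot", url))
```

Running a check like this before deploying a robots.txt change is a cheap way to avoid accidentally blocking pages you want crawled.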

The Importance of Crawl Frequency for SEO

Search engine bots are central to SEO: they scan the web, visit websites, and index new content. High crawl frequency tends to correlate with better SEO performance, because a frequently crawled site gets its fresh content into the index sooner, which can support its search rankings. Frequent crawling also enhances user experience by keeping the information shown in search results up to date and valuable. Google aims to connect users with relevant content, making frequent indexing crucial for effective SEO.

Boosting Crawl Frequency: Emphasizing Quality Content

Regularly updating your website increases crawl frequency. Fresh content signals relevance and value to search engines. Content like news articles, blogs, and forums requires frequent updates to remain relevant. Even static pages benefit from occasional updates. Quality still comes first: frequent updates shouldn’t compromise substance. Experiment with update schedules and monitor search rankings to find the right balance.

Crawl Frequency and Site Links: The Connection

The quantity and quality of links on your website influence crawl frequency. Internal links aid navigation for users and crawlers, promoting rapid indexing. Outbound or external links contribute to the crawl rate by indicating content relevance and quality. Backlinks (inbound links from other sites) also play a significant role, since they give crawlers more paths by which to discover your pages. Broken links can hinder crawl frequency and should be promptly fixed.
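A first step in auditing links is simply listing them. The sketch below, using only the standard library, extracts the links from a page’s HTML and separates internal from external ones. The names `LinkCollector` and `split_links` and the sample URLs are hypothetical; checking each link for a broken target would be a separate step that requests every URL.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect absolute http(s) URLs from <a href="..."> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        absolute = urljoin(self.base_url, href)  # resolve relative links
        if urlparse(absolute).scheme in ("http", "https"):
            self.links.append(absolute)  # skips mailto:, tel:, etc.


def split_links(html, base_url):
    """Return (internal, external) link lists for a page at base_url."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    site = urlparse(base_url).netloc
    internal = [u for u in collector.links if urlparse(u).netloc == site]
    external = [u for u in collector.links if urlparse(u).netloc != site]
    return internal, external


if __name__ == "__main__":
    sample = '<a href="/about">About</a> <a href="https://other.org/x">Ref</a>'
    print(split_links(sample, "https://example.com/blog/"))
```

This comparison treats subdomains such as www.example.com and example.com as different sites, which may or may not match how you want to count internal links.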

Understanding Crawl Errors: Impacts on Crawl Frequency

Crawl errors can disrupt a search engine’s crawl rate. They show up in your crawl statistics, and a crawler that repeatedly runs into errors tends to reduce its crawl rate. Server errors, often caused by high traffic, and URL errors, often from broken or missing web pages, can impede crawling. Timely resolution of crawl errors is essential for maintaining crawl rates.
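One simple way to triage crawl errors is to group crawled URLs by HTTP status code. The sketch below assumes that server errors correspond to 5xx responses and URL errors to 4xx responses (such as 404). The function names and the sample data are hypothetical, and the (url, status) pairs would in practice come from your server logs or a crawl report.

```python
def classify_status(status):
    """Map an HTTP status code to a coarse crawl-error category."""
    if 500 <= status <= 599:
        return "server_error"  # e.g. 500, 503: the server failed to respond properly
    if 400 <= status <= 499:
        return "url_error"     # e.g. 404, 410: the page is broken or missing
    return "ok"                # 2xx and 3xx responses


def group_crawl_errors(results):
    """Group (url, status) pairs by category so each kind can be fixed in turn."""
    groups = {"server_error": [], "url_error": [], "ok": []}
    for url, status in results:
        groups[classify_status(status)].append(url)
    return groups


if __name__ == "__main__":
    # Hypothetical crawl results, for illustration only.
    sample = [("/", 200), ("/old-page", 404), ("/search", 503), ("/moved", 301)]
    for category, urls in group_crawl_errors(sample).items():
        print(category, urls)
```

Server errors usually deserve attention first, since they can affect every page on the site, while URL errors are typically fixed page by page with redirects or restored content.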

Leveraging Google Search Console for Crawl Frequency Insights

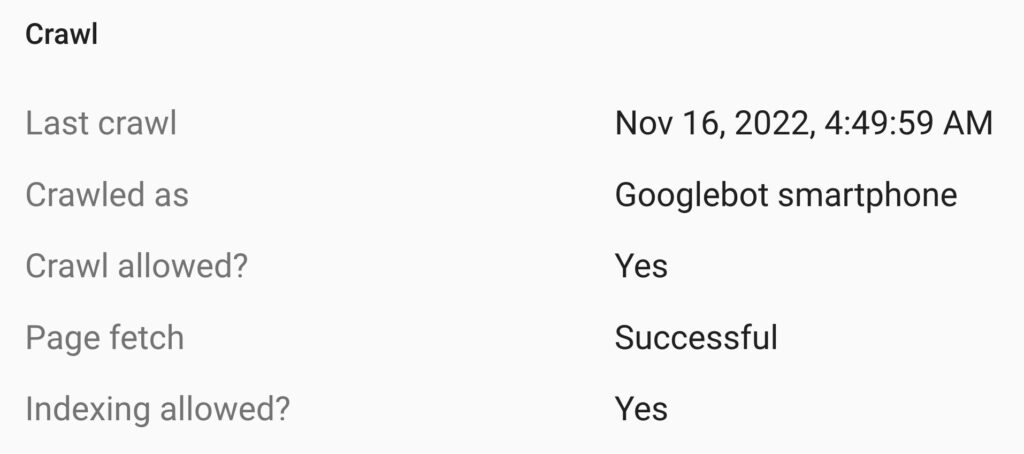

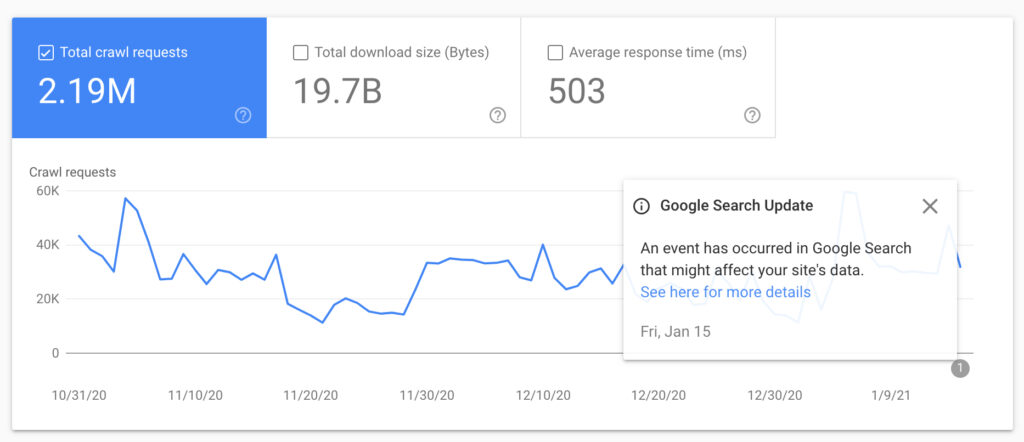

Google Search Console provides insights into crawl statistics, helping webmasters and SEO practitioners monitor crawl frequency. The ‘URL inspection tool’ offers detailed information about each URL’s recent crawling events, aiding in-depth exploration of crawl frequency. Crawl frequency directly impacts how quickly site updates appear in search results, making Google Search Console a valuable resource for understanding and enhancing crawl statistics.

Optimizing Your Server for Improved Crawl Frequency

Server performance, response speed, and error handling influence crawl frequency. A fast server results in a higher crawl speed. A well-performing server can handle multiple requests without issues, increasing indexing speed. Balancing server speed and crawl frequency is crucial, as excessively fast crawling can overload the server. Optimize for both speed and resilience under high crawl rates.

Crawl Frequency and Domain Authority

Website domain authority significantly affects crawl frequency. Sites with higher domain authority are crawled more frequently as they are perceived as authoritative. Lower authority sites receive smaller crawl budgets, leading to fewer visits and less frequent indexing. Improving domain authority enhances crawl frequency and SEO, although it requires consistent effort and time.

Monitoring Crawl Frequency for Ongoing Updates and Fixes

Regular monitoring of crawl frequency is essential to maintain optimal site performance. Google Search Console’s URL Inspection tool and Crawl Stats report provide insights into crawl frequency. Maintaining a healthy crawl budget and monitoring crawl frequency support both user-friendliness and search result rankings.
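Alongside Search Console, your own server logs can show how often Googlebot visits. The sketch below counts Googlebot requests per day, assuming the access log uses the common "combined" log format. The function name and sample data are hypothetical. Note that a user agent string can be spoofed, so this is an estimate rather than a verified count.

```python
import re
from collections import Counter
from datetime import datetime

# Combined log format (assumed): IP, identity, user, [time], "request", status, size, "referer", "agent"
LOG_PATTERN = re.compile(
    r'^\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_per_day(log_lines):
    """Return {ISO date: request count} for lines whose user agent mentions Googlebot."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.match(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue  # skip unparseable lines and other visitors
        when = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z")
        hits[when.date().isoformat()] += 1
    return dict(hits)


if __name__ == "__main__":
    # "access.log" is a placeholder path; point it at your own log file.
    with open("access.log", encoding="utf-8", errors="replace") as handle:
        for day, count in sorted(googlebot_hits_per_day(handle).items()):
            print(day, count)
```

A sustained drop in daily hits is a cue to look for crawl errors or slow responses in the same period.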

Summarizing Crawl Frequency for SEO

Google Crawl Frequency is a critical aspect of SEO, influencing search engine bots’ visits to your site for content indexing. Understanding, monitoring, and optimizing crawl frequency are essential for improved search result rankings. The strategies discussed here can lead to enhanced crawl frequency, better rankings, and a more effective SEO strategy. If you need professional assistance with crawl frequency and SEO, consider consulting an expert for personalized guidance and expertise.